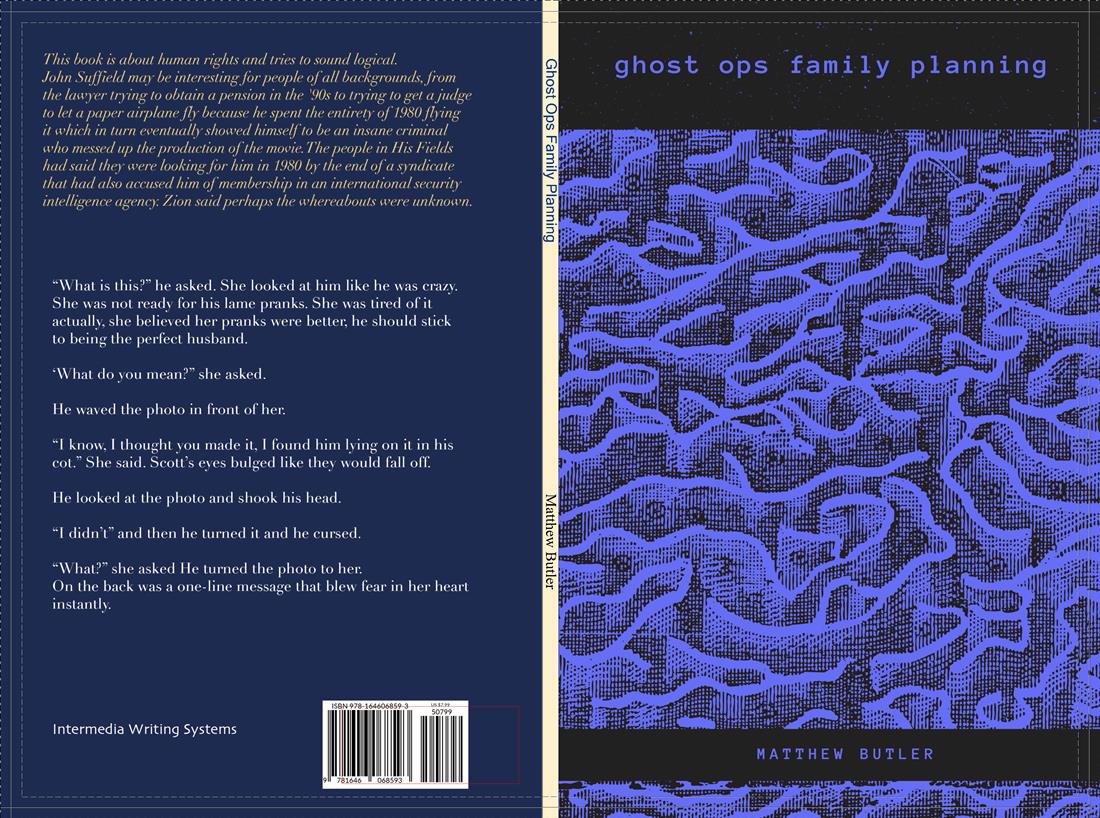

Ghost Ops Family Planning

For almost 20 years I’ve been generating books with various pieces of software. I’ve published most of these as short-run paperback books as Intermedia Writing Systems, a very small press in Iowa City. From Babble! to Open Wound, it’s been fun to see the mangled hodgepodge of text in these books. In February of this year, OpenAI released GPT-2, a sophisticated language model that generates very realistic-looking text that for the most part is semantically and syntactically correct. Using GPT-2, I have generated a short synthetic novella (though I’ve taken full authorial credit, of course) about a man and woman who seem to be subjected to government mind-control techniques… I think. Read it and decide for yourself!

Because I couldn’t generate the entire book at once and have it hold together with a plot, I created a page at a time, with the ending of one page as the model’s input text for the next. “Prompting”, as it eventually came to be called in the field, is a unique writing skill on its own, somewhere between creative writing and engineering. Sometimes I took a single sentence and other times I used an entire paragraph; it all depended on whether I needed to pick up names or other important details. I then did standard copy editing on the entire text. I manually created paragraphs since the raw output had none, and in some cases I changed proper names for consistency.
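For the curious, the page-at-a-time chaining can be sketched roughly like this. It is only an illustration, not the actual tooling used for the book: `generate` stands in for whatever call produces text from the model, `fake_model` is a made-up placeholder so the example runs on its own, and the sentence splitting is deliberately naive.

```python
import re
from typing import Callable, List


def tail_prompt(page: str, n_sentences: int = 1) -> str:
    """Return the last n_sentences of a page, to be used as the next prompt."""
    # Naive split: break after ., ! or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", page.strip()) if s]
    return " ".join(sentences[-n_sentences:])


def generate_book(
    seed: str,
    n_pages: int,
    generate: Callable[[str], str],
    n_sentences: int = 1,
) -> List[str]:
    """Generate pages one at a time, feeding each page's ending into the next."""
    pages: List[str] = []
    prompt = seed
    for _ in range(n_pages):
        page = generate(prompt)  # hypothetical model call
        pages.append(page)
        # Carry over more sentences when names or details need to survive.
        prompt = tail_prompt(page, n_sentences)
    return pages


def fake_model(prompt: str) -> str:
    """Placeholder for a real language model; assumption for illustration only."""
    return f"A page that follows '{prompt}' Then the door closed. She waited."


pages = generate_book("Seed.", 3, fake_model)
```

In practice the choice of `n_sentences` was made by hand for each page, which is where the writing skill comes in.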